As I sat down to write this week’s Quick Theories at a coffee shop, I accepted the shop’s WiFi Privacy Policy without fully knowing what was written in the fine print. And I’d venture to guess that you’ve accepted many Terms and Conditions, Privacy Policies, and Service Agreements without knowing at all what you were agreeing to.

Tim Berners-Lee, the father of the World Wide Web, ranked his top worries for the future of the Internet. His number one concern: we’ve come to blindly accept the labyrinthine terms of service attached to most technologies and, in doing so, have given away control of our personal data.

It’s a major problem that only Artificial Intelligence may be able to solve.

The Problem with Privacy Policies

Honestly, we don’t read Privacy Policies because they aren’t written for us. They’re written for their authors: the companies. They’re packed with legal jargon meant to save the company’s butt in an emergency and to grant it access to our data.

For instance, Apple is famous for its behemoth 20,699-word iTunes Terms and Conditions. Millions of people have accepted those terms without the slightest clue what they entailed.

In part, that’s why R. Sikoryak turned the iTunes Terms and Conditions into a 94-page graphic novel. As a result, thousands bought the novel and learned about the contract they once signed.

Perhaps what’s most concerning about these marathon-length contracts is that literally anything can be slid into them without our knowing.

Purple, a UK WiFi hotspot provider, hid a “Community Service Clause” in its service agreement. Some 22,000 people at coffee shops and restaurants across the UK agreed to 1,000 hours of menial labor (including cleaning animal waste from local parks, cleaning portable lavatories at local festivals and events, and scraping chewing gum off the streets) when they checked the box to use Purple’s WiFi. Thankfully, the clause was a gimmicky ad campaign meant to raise awareness of how much power these public contracts carry.

The moral of the story is that many of the terms of service we agree to every week require a high level of legal knowledge and lots of time to understand. As a result, companies easily get the upper hand in the deal.

Agreeing to Facebook’s Terms of Service grants it permission to track your activity across the Internet. For years, agreeing to Gmail’s Terms and Conditions allowed Google to scan your emails and serve ads based on their contents. In the Terms of Service, you’re giving away your identity.

Ideally, if more of us understood the terms, we could band together and demand better ones. And that’s where AI comes in.

Policing Policies with Polisis

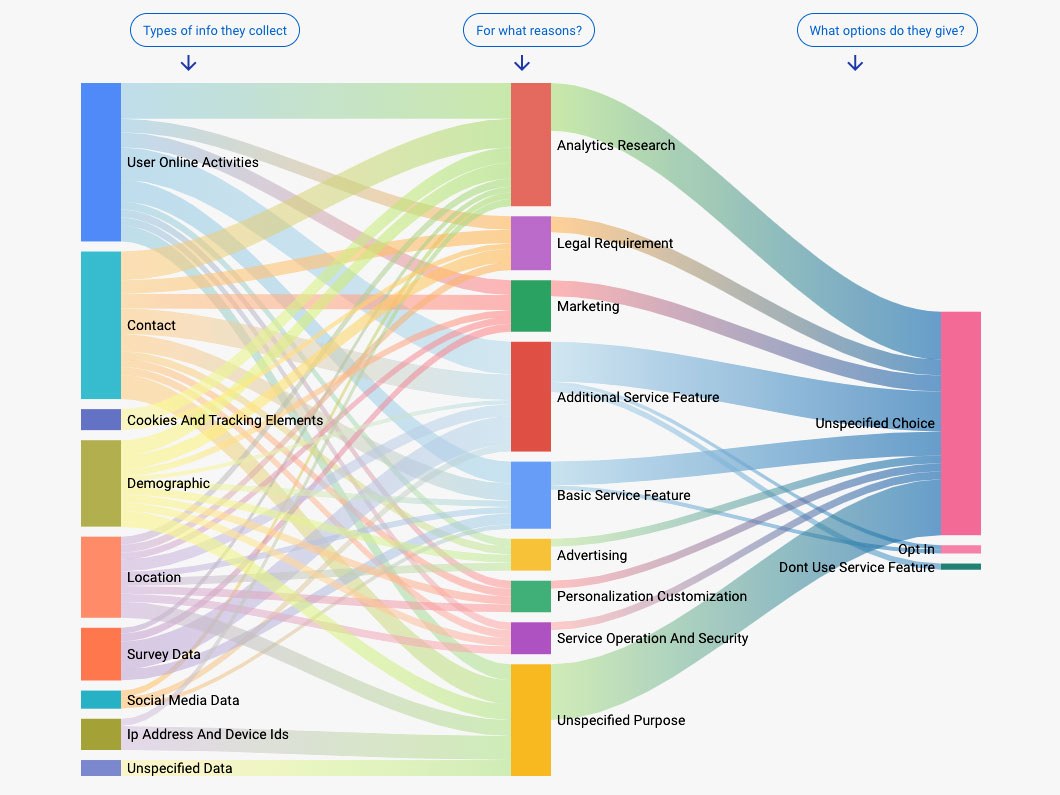

Researchers at Switzerland’s EPFL, the University of Wisconsin, and the University of Michigan announced the release of Polisis. Short for “privacy policy analysis”, Polisis is a website and browser extension that uses machine learning to automatically read and make sense of any online service’s privacy policy, so you don’t have to.

In about 30 seconds, Polisis extracts a readable summary of a privacy policy it has never seen before. Best of all, the summary is displayed as a flowchart outlining what kinds of data a service collects, where that data could be sent, and whether a user can opt out of that collection or sharing.

Here’s what the Polisis AI pieced together for Pokémon Go’s terms of use:

[Image: Polisis flowchart summarizing Pokémon Go’s terms of use]

Just like Tim Berners-Lee, the researchers behind Polisis want us to at least know what we’re giving away in technology service agreements, especially since most people don’t grasp the magnitude of this problem.

There are potentially hundreds of apps, services, and websites you’ve used or visited that required you to check a Terms and Conditions box. More likely than not, they included a clause allowing them to invade your privacy, track your activity, collect information, and use your data for their own benefit.

Your Digital Identity, which encompasses all the data generated by your online activity, may be one of the most valuable assets for your future.

If you’re just now coming to terms with this fact, it’s all right. There’s never been a better time to begin protecting your Digital Identity. That’s why I’ve built a resource to help you, which you can check out here: Digital Identity Series.

With your ambition and tools like Polisis, we can regain control of our own data.

Dwell on the Good for Once

Thanks to the media and our craving for fearful stories, we all tend to focus on the negative AI narratives. Honestly, most Artificial Intelligence initiatives that make it to the mainstream media are “job-threatening” or “life-as-we-know-it-altering” technologies.

However, this isn’t the whole story. Polisis is a great example of AI that will improve our lives. It’s a tangible, beneficial resource that isn’t killing jobs or changing life forever. And I think the way stories like this get overlooked says a lot about human behavior in general.

So often we allow our days to be ruined by something bad. It seems like we’re always dwelling on the negative: the waitress who took forever to serve our food, or the co-worker who botched an assignment and disrupted our perfectly planned day.

But, why don’t we ever let something good ruin our day?

Why not dwell on the person who kindly let you change lanes ahead of them? How about the barista who took your order with a great big smile?

These moments are just as “insignificant” as the negative ones. Yet we routinely let the negative take over our mental space.

Make a change and dwell on something good that happened today. Your mind could use the uplift.

I hope you enjoyed this week’s Quick Theories. Please shoot me your thoughts on Privacy Policies, AI, or anything in your head that you can’t wait to get out.
